Reframing Maritime Artificial Intelligence Through Human Cognitive Load

Maritime digitalization is accelerating. AI-driven predictive maintenance, performance analytics, emissions optimization, and remote monitoring platforms are becoming standard across fleets.

Yet a critical variable remains underexamined:

Does maritime AI reduce operational cognitive load or increase it?

In many deployments, artificial intelligence systems have added dashboards, alerts, reporting layers, and validation requirements without reducing existing workload structures.

The result is not transformation.

It is layered complexity.

This article argues that the future of maritime AI depends not on algorithmic sophistication, but on its ability to reduce watchkeeper burden, integrate into operational culture, and align with real shipboard constraints.

1. The Operational Reality: Life Inside a Watch

Last month, I was speaking with a mid-seniority engineer onboard a large commercial vessel. Fifteen years at sea. Solid machinery instincts. Calm under pressure.

He said something simple:

“We were trained for ships. Not for managing software ecosystems.”

That statement captures the fault line.

A modern watchkeeper today manages:

- PMS/IPMS/IBS/IAMCS/MCS software solutions

- Alarm monitoring systems

- Engine performance dashboards

- Fuel optimization tools

- Emissions reporting portals

- Electronic logbooks

- Email instructions from shore

- Remote diagnostic queries

All while operating in:

- High noise environments

- Heat stress

- Fatigue cycles

- Reduced crew complements

- Intermittent connectivity

The physical environment has not become easier.

The digital environment has multiplied.

2. The Cognitive Load Problem

Shipping measures:

- Fuel efficiency

- Engine performance

- CII ratings

- Emissions compliance

- Downtime metrics

But rarely measures:

- Alert frequency per watch

- Dashboard switching frequency

- Duplicate data entry events

- Manual override requirements

- Human interpretation load

AI systems are evaluated on prediction accuracy.

They are rarely evaluated on cognitive impact.

This is a strategic oversight.

Because in safety-critical environments, cognitive overload directly correlates with risk.
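The rarely measured indicators listed above could be counted from an ordinary interaction log. The following is a minimal sketch, assuming a hypothetical `WatchEvent` record with a watch identifier and an event kind; the field names and event kinds are illustrative and do not come from any real shipboard system.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class WatchEvent:
    """One logged interaction during a watch (hypothetical schema)."""
    watch_id: str  # e.g. "0400-0800"
    kind: str      # "alert", "screen_switch", "duplicate_entry", "manual_override"


def load_per_watch(events):
    """Count each kind of event separately for every watch."""
    counts = {}
    for event in events:
        counts.setdefault(event.watch_id, Counter())[event.kind] += 1
    return counts


events = [
    WatchEvent("0400-0800", "alert"),
    WatchEvent("0400-0800", "alert"),
    WatchEvent("0400-0800", "screen_switch"),
    WatchEvent("0800-1200", "duplicate_entry"),
]
summary = load_per_watch(events)
print(summary["0400-0800"]["alert"])  # 2
```

Counts like these say nothing about how hard each alert was to interpret, but they would give a baseline that prediction accuracy alone cannot.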

3. Alert Fatigue: The Hidden Safety Threat

Traditional machinery alarms already demand prioritization.

Now AI systems add:

- Predictive anomaly alerts

- Efficiency deviations

- Performance optimization suggestions

- Remote shore flags

When every parameter deviation generates an alert, prioritization becomes diluted.

The mid-level engineer I spoke to described it bluntly:

“Sometimes we don’t know which alert actually matters anymore.”

That is not a minor issue.

That is systemic noise.

In aviation, alarm prioritization design is rigorously engineered.

In maritime AI deployment, it is often secondary.

Quantifying the Human-System Imbalance

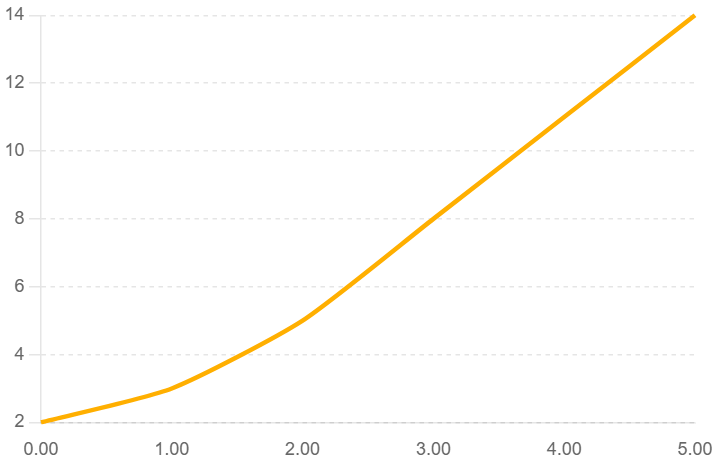

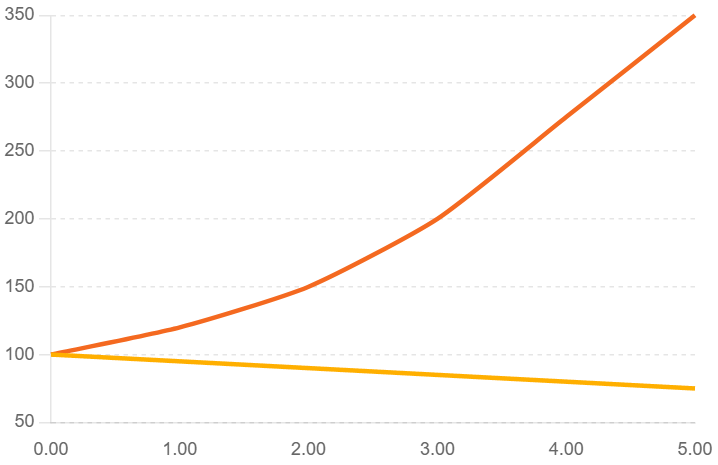

- Digital systems per vessel ↑ 5–7x

- Crew complements ↓ ~20–25% (industry trend)

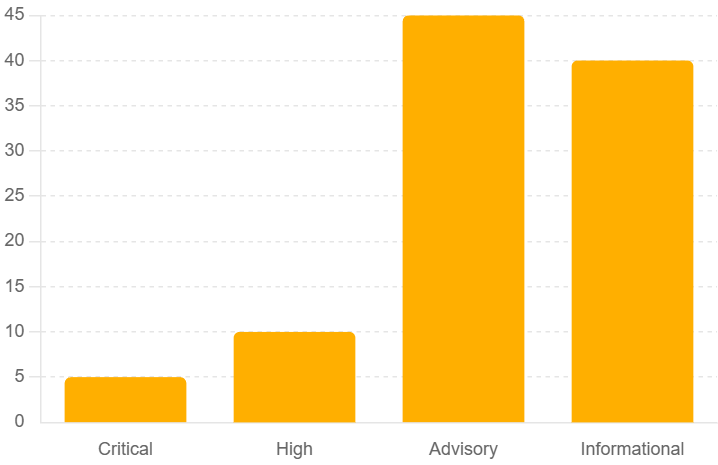

- Alert density dominated by non-critical notifications

- 2000s: PMS + alarm monitoring

- 2010s: Fuel optimization + emissions + E-logbooks

- 2020s: AI predictive maintenance + remote monitoring + ESG dashboards + cyber layers

The operational environment has become digitally denser.

This reflects alarm fatigue patterns reported across safety-critical industries (healthcare, aviation, energy), where:

- 70–90% of alerts are low-priority or informational

- <10% require immediate action

Maritime is now entering the same phase with predictive AI alerts layered on top of traditional alarms.

Key Insight:

When roughly 85% of alerts are advisory or informational, attention becomes diluted.

This is the structural tension.

Over the past decades:

- Crew complements have gradually reduced due to automation and cost optimization.

- Digital system density has increased several-fold (roughly 5–7x per vessel).

The gap is widening.

Fewer people.

More systems.

More alerts.

More reporting.

Maritime AI deployment is occurring in an environment where human processing capacity is static or declining while digital signal volume is accelerating.

4. The Training Gap

Maritime academies still focus, correctly, on:

- Thermodynamics

- Machinery systems

- Navigation

- Safety conventions

- Marine law

But very few institutions integrate:

- Data literacy

- AI interpretation principles

- Model uncertainty

- Human-machine decision frameworks

- Cyber risk awareness at operational level

As a result, onboard personnel encounter AI systems without structured preparation.

The outcomes are predictable:

- Blind trust

- Complete skepticism

- Partial misuse

None of these is optimal.

The industry has introduced advanced digital systems without proportionally evolving training architecture.

5. Software Proliferation Across Fleets

A single vessel may operate 8–12 independent digital platforms.

Across a fleet, the variation multiplies:

- Different vendors

- Different UI logics

- Different authentication systems

- Different data formats

- Different alert structures

There is no unified cognitive architecture.

There is aggregation but not integration.

Adding AI into this environment without consolidation increases fragmentation.

6. Shore vs Ship: The Interpretation Divide

AI systems often empower shore analytics teams more than onboard crew.

Shore sees fleet-wide anomaly detection.

Onboard sees operational nuance:

- Heavy weather adjustments

- Fuel quality variation

- Improvised maintenance

- Aging component behavior

- Manual overrides

When AI flags deviation without context, explanation burden shifts to crew.

One engineer phrased it this way:

“We now spend time explaining data instead of managing machinery.”

If AI increases reporting cycles instead of reducing them, its operational value must be questioned.

7. The Connectivity Illusion

Many maritime AI systems are designed cloud-first.

Ships operate under:

- Limited bandwidth

- Satellite latency

- Data cost constraints

- Periodic desynchronization

If a system requires continuous connectivity to remain reliable, trust degrades quickly at sea.

Maritime AI should prioritize:

- Edge processing

- Offline resilience

- Minimal transmission architecture

- Local interpretability

Otherwise, it introduces friction.
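One way to picture offline resilience is a store-and-forward outbox: onboard functions keep working, and only the shore synchronization waits for a link. This is a minimal sketch under stated assumptions. The class name `StoreAndForward`, the `send` callback, and the queue size are hypothetical and are not taken from any vendor's product.

```python
from collections import deque


class StoreAndForward:
    """Hold outbound messages locally and drain them when a link is available."""

    def __init__(self, send, max_items=1000):
        self._send = send                      # callable(message) -> True if delivered
        self._queue = deque(maxlen=max_items)  # oldest items drop first if storage fills

    def submit(self, message):
        self._queue.append(message)

    def flush(self):
        """Deliver queued messages in order; stop at the first failure."""
        delivered = 0
        while self._queue:
            if not self._send(self._queue[0]):
                break
            self._queue.popleft()
            delivered += 1
        return delivered

    def pending(self):
        return len(self._queue)


link = {"up": False}  # simulated satellite link state
delivered_ashore = []


def send(message):
    if not link["up"]:
        return False
    delivered_ashore.append(message)
    return True


outbox = StoreAndForward(send)
outbox.submit("noon report")
outbox.submit("main engine trend summary")
print(outbox.flush(), outbox.pending())  # 0 2 while the link is down
link["up"] = True
print(outbox.flush(), outbox.pending())  # 2 0 once the link returns
```

The design choice here is that a lost link changes when data reaches shore, not whether the crew can keep using the tool.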

8. Human Factors Engineering: The Missing Discipline

Maritime systems engineering is highly developed.

Human factors engineering in digital maritime systems is not.

Questions rarely asked:

- Does this system reduce decision time?

- Does it simplify interpretation?

- Does it align with watch cycles?

- Does it account for fatigue patterns?

- Does it reduce screen switching?

If AI adds layers without subtracting existing ones, it increases net workload.

Innovation should reduce layers.

9. Compliance-Driven vs Performance-Driven AI

A significant portion of maritime AI adoption is regulatory-driven:

- Emissions monitoring

- ESG reporting

- Carbon intensity tracking

- Data transparency requirements

Compliance is necessary.

But compliance-driven systems often prioritize reporting accuracy over operational simplicity.

The result is additional data input obligations onboard.

AI becomes a reporting instrument rather than a performance enabler.

10. From Dashboard to Decision

True maritime AI should aim to:

- Consolidate interfaces

- Suppress non-critical alerts

- Prioritize actionable deviations

- Explain predictions transparently

- Adapt to vessel-specific baselines

- Reduce manual reporting

If it does not reduce:

- Screen time

- Alert count

- Interpretation complexity

- Reporting workload

It is not operationally transformative.

It is additive.
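Adapting to vessel-specific baselines can be illustrated with a simple statistical check: a reading is flagged only when it departs from this vessel's own recent history, not from a fleet-wide average. The sketch below is an assumption for illustration. The function name, the three-standard-deviation threshold, and the sample exhaust temperatures are all hypothetical.

```python
from statistics import mean, stdev


def deviates_from_baseline(history, reading, threshold=3.0):
    """True if `reading` is more than `threshold` standard deviations
    away from the mean of this vessel's own `history`."""
    if len(history) < 2:
        return False  # not enough data to define a baseline
    spread = stdev(history)
    if spread == 0:
        return reading != history[0]
    return abs(reading - mean(history)) / spread > threshold


# Hypothetical exhaust gas temperatures (deg C) for one cylinder on one vessel
history = [352, 355, 349, 351, 354, 350, 353]
print(deviates_from_baseline(history, 356))  # False: within normal scatter
print(deviates_from_baseline(history, 365))  # True: well outside this vessel's pattern
```

A check like this would stay silent for an aging engine that always runs a little warm, while still reacting to a change in that engine's own behavior.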

11. The Cultural Mismatch

Software culture:

- Rapid iteration

- Continuous deployment

- Version updates

Maritime culture:

- Stability

- Predictability

- Tested redundancy

- Operational consistency

An AI system that changes interface logic frequently may improve technically but erode operational trust.

Maritime AI must respect the culture it enters.

12. The Path Forward

A. Measure Cognitive Load

Before and after digital deployment.

B. Integrate AI Literacy into Maritime Curriculum

From cadet level onward.

C. Reduce Platform Multiplicity

Consolidate before adding.

D. Design for Edge and Offline Operation

Not cloud dependency.

E. Include Watchkeepers in System Architecture Design

Co-design reduces resistance and improves usability.

13. Policy & Strategic Recommendations

To keep artificial intelligence from adding confusion, maritime stakeholders (regulators, shipowners, classification societies, training institutions, and technology providers) need clear governance frameworks.

The following recommendations provide a strategic roadmap.

a. Establish Cognitive Load Impact Assessments (CLIA) Before Deployment

Policy Proposal:

Mandate structured Cognitive Load Impact Assessments prior to large-scale AI system deployment onboard vessels.

Rationale:

Current digital deployments are evaluated based on:

- Technical capability

- Predictive accuracy

- Compliance coverage

They are not evaluated based on:

- Alert volume increase

- Screen interaction complexity

- Manual intervention frequency

- Watchkeeper workload impact

Implementation:

- Pre- and post-deployment workload benchmarking

- Alert frequency audits

- Human-system interaction mapping

- Crew feedback loops integrated into acceptance testing

AI should only scale fleet-wide after measurable reduction in operational friction.
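The pre- and post-deployment benchmarking step could be as simple as comparing the same workload indicators before and after a pilot. The following is a rough sketch with hypothetical metric names and numbers. The gate in `ready_to_scale`, which requires every tracked indicator to fall, is one possible reading of "measurable reduction" and is an assumption, not an established standard.

```python
def clia_comparison(before, after):
    """Percent change per metric; negative means the workload indicator went down."""
    changes = {}
    for metric, baseline in before.items():
        if metric in after and baseline:
            changes[metric] = round(100 * (after[metric] - baseline) / baseline, 1)
    return changes


def ready_to_scale(changes):
    """Assumed gate: every tracked indicator must have decreased."""
    return bool(changes) and all(change < 0 for change in changes.values())


# Hypothetical per-watch averages from a pilot vessel
before = {"alerts": 40, "screen_switches": 60, "manual_entries": 20}
after = {"alerts": 32, "screen_switches": 66, "manual_entries": 15}

changes = clia_comparison(before, after)
print(changes)                  # {'alerts': -20.0, 'screen_switches': 10.0, 'manual_entries': -25.0}
print(ready_to_scale(changes))  # False: screen switching went up
```

In this made-up pilot the system cut alerts and manual entries but added screen switching, so it would go back for rework before fleet-wide rollout.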

b. Integrate AI & Digital Systems Literacy into Maritime Education

Policy Proposal:

Update maritime academy curricula to include:

- AI fundamentals for operators

- Data interpretation principles

- Model uncertainty and probabilistic reasoning

- Human-machine decision frameworks

- Cyber hygiene in AI-enabled vessels

Rationale:

Crew are trained for mechanical systems, not algorithmic interpretation.

Without digital literacy:

- AI recommendations are misused

- Trust collapses

- Operational tension increases

Strategic Outcome:

Create digitally fluent officers capable of:

- Questioning AI outputs

- Validating predictions

- Escalating anomalies intelligently

This reduces blind reliance and blind resistance.

c. Standardize Alert Prioritization Frameworks Across Systems

Policy Proposal:

Develop an industry-wide AI Alert Classification Standard aligned with machinery alarm hierarchies.

Current Problem:

Each vendor defines alert logic independently.

Result:

- Duplicate notifications

- Overlapping priority levels

- Inconsistent escalation pathways

Recommended Model:

Tiered alert architecture:

- Critical (Immediate Safety Risk)

- High (Operational Degradation Risk)

- Advisory (Performance Optimization)

- Informational (Trend Awareness)

AI alerts must not bypass established alarm protocols.

They must integrate into them.
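The four-tier model above can be sketched as a small triage step that merges duplicate notifications from different vendors and shows only the top tiers on the main display. The equipment names, the choice to keep the most severe tier when vendors disagree, and the `show_up_to` cut-off are assumptions for illustration and do not come from any published standard.

```python
from enum import IntEnum


class Tier(IntEnum):
    CRITICAL = 0       # immediate safety risk
    HIGH = 1           # operational degradation risk
    ADVISORY = 2       # performance optimization
    INFORMATIONAL = 3  # trend awareness


def triage(alerts, show_up_to=Tier.HIGH):
    """Merge duplicates per (equipment, condition), keep the most severe tier,
    then split into alerts shown now and alerts only logged."""
    most_severe = {}
    for equipment, condition, tier in alerts:
        key = (equipment, condition)
        if key not in most_severe or tier < most_severe[key]:
            most_severe[key] = tier
    ranked = sorted(most_severe.items(), key=lambda item: item[1])
    shown = [(key, tier) for key, tier in ranked if tier <= show_up_to]
    logged = [(key, tier) for key, tier in ranked if tier > show_up_to]
    return shown, logged


alerts = [
    ("ME cyl 3", "exhaust temp high", Tier.ADVISORY),       # vendor A
    ("ME cyl 3", "exhaust temp high", Tier.HIGH),           # vendor B, same condition
    ("AE 2", "fuel consumption trend", Tier.INFORMATIONAL),
    ("LO pump 1", "pressure low", Tier.CRITICAL),
]
shown, logged = triage(alerts)
print([key for key, _ in shown])   # [('LO pump 1', 'pressure low'), ('ME cyl 3', 'exhaust temp high')]
print([key for key, _ in logged])  # [('AE 2', 'fuel consumption trend')]
```

Four incoming notifications become two items on screen and one in the log, which is the kind of reduction a shared classification standard would make possible across vendors.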

d. Mandate Edge-Capable and Offline-Resilient Design

Policy Proposal:

Classification societies and flag administrations should require:

- Offline operational capability

- Local model processing where feasible

- Reduced bandwidth dependency

Rationale:

Cloud-dependent AI undermines reliability in low-connectivity conditions.

Operational integrity at sea requires:

- Predictability

- Latency resilience

- Local decision support continuity

Maritime AI must be engineered for saltwater constraints, not corporate broadband.

e. Require Crew Inclusion in AI System Architecture Review

Policy Proposal:

Make onboard operational feedback a formal part of AI procurement and renewal contracts.

Implementation:

- Include watchkeepers in pilot programs

- Conduct usability stress tests under real watch cycles

- Integrate human feedback into software update cycles

Rationale:

Systems designed without operational input create friction.

Inclusion builds ownership.

Ownership builds trust.

f. Consolidate Platform Architecture Before Adding AI Layers

Policy Proposal:

Encourage fleet-level digital consolidation audits before introducing new AI systems.

Current Situation:

Vessels may operate 8–12 digital systems independently.

Adding AI on top multiplies fragmentation.

Recommended Action:

- Identify redundant dashboards

- Merge reporting pipelines

- Centralize authentication structures

- Reduce interface switching

AI must simplify architecture, not complicate it.

g. Introduce AI Governance Frameworks for Maritime Operations

Policy Proposal:

Adopt structured AI governance principles tailored to maritime environments, including:

- Transparency in model logic

- Clear accountability pathways

- Defined override authority

- Auditability of AI decisions

Rationale:

When AI influences maintenance, routing, or machinery decisions, accountability must remain clear.

Operational command structures must not be blurred by algorithmic ambiguity.
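Auditability and defined override authority could be supported by logging every AI advisory together with who decided on it and why. The record below is a minimal sketch. The `AdvisoryRecord` fields, the rule that a rejected advisory must carry a reason, and the sample values are all hypothetical.

```python
import json
from dataclasses import asdict, dataclass
from typing import Optional


@dataclass(frozen=True)
class AdvisoryRecord:
    """One AI recommendation and the human decision taken on it (hypothetical schema)."""
    issued_at: str        # ISO 8601 timestamp, UTC
    model_version: str
    recommendation: str
    rationale: str        # the explanation shown to the crew
    decided_by: str       # rank or role holding override authority
    accepted: bool
    override_reason: Optional[str] = None


def to_log_line(record):
    """Serialize a record for an append-only log; a rejection must state a reason."""
    if not record.accepted and not record.override_reason:
        raise ValueError("a rejected advisory must carry an override reason")
    return json.dumps(asdict(record), sort_keys=True)


record = AdvisoryRecord(
    issued_at="2024-05-01T04:30:00Z",
    model_version="pm-model-1.4",
    recommendation="Bring forward fuel pump overhaul",
    rationale="Vibration trend above vessel baseline",
    decided_by="Chief Engineer",
    accepted=False,
    override_reason="Sensor replaced last port call; trend is a calibration artifact",
)
print(to_log_line(record))
```

The point of the sketch is that the human decision and its reasoning sit in the same record as the algorithm's output, so accountability stays traceable to a person.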

h. Shift Evaluation Metrics from Prediction Accuracy to Operational Impact

Policy Proposal:

Redefine success criteria for maritime AI.

Move from:

- Model accuracy percentages

- Data processing speed

- Dashboard sophistication

To:

- Reduced alert volume

- Reduced manual reporting hours

- Reduced cognitive friction

- Improved decision clarity

- Increased crew confidence

If the human workload remains constant or increases, the system has failed operationally regardless of algorithm quality.

i. Develop Maritime Human-Machine Interface Standards

Maritime digital systems should incorporate structured human factors engineering similar to aviation.

This includes:

- Fatigue-aware interface design

- Watch-cycle adaptive notification models

- Noise-resistant alert logic

- Visual hierarchy consistency

The bridge and engine control room are not corporate offices.

Design principles must reflect that.

j. Establish a “Human-Centric AI Certification” Pathway

Forward-looking regulatory bodies and classification societies could introduce certification frameworks that evaluate:

- Human-machine alignment

- Alert architecture integrity

- Cognitive load reduction metrics

- Edge resilience

- Operational transparency

This would incentivize vendors to compete on usability and integration, not only on predictive claims.

14. Innovation or Noise?

Maritime AI will not fail because of poor algorithms.

It will fail because of poor alignment with human systems.

At sea:

Clarity equals safety.

If artificial intelligence increases:

- Alert frequency

- Screen complexity

- Reporting cycles

- Explanation burden

Then it is not intelligence.

It is noise.

The next generation of maritime intelligence systems must not simply be AI-powered.

They must be human-aligned.

Because the future of shipping will not be decided by code alone.

It will be decided by whether the person on watch feels supported or overwhelmed.
