Recently, I had a detailed conversation with Joachim Rosenegger about AI in maritime: its implications, and what actually works.

Joachim’s perspective is grounded in implementation, not theory. His work sits close to where maritime technology either survives contact with reality or quietly fails. Retrofit constraints, compliance workflows, cybersecurity exposure, operational friction. Not ideas. Outcomes.

That shaped the conversation from the start.

This wasn’t about what AI can do. It was about what actually holds up when decisions carry financial, regulatory, and operational consequences. Very quickly, the discussion moved away from AI itself and toward how shipping actually makes decisions.

The Conversation Didn’t Start With AI

It began, as these discussions often do, with a familiar premise: artificial intelligence in maritime. Its promise. Its trajectory. Its growing presence across systems that claim to optimise, predict, and guide. But the conversation did not stay there for long. Across the table, Joachim Rosenegger steered it in a different direction. Not toward capability, but toward consequence. Not toward what systems are designed to do, but toward what actually happens once they are deployed. The subject shifted, almost quietly, from AI to failure.

Not the kind that makes headlines: cancelled pilots, overspent budgets, abandoned rollouts. Those are visible, documented, and often explained away. This was about a different category of failure.

Operational failure.

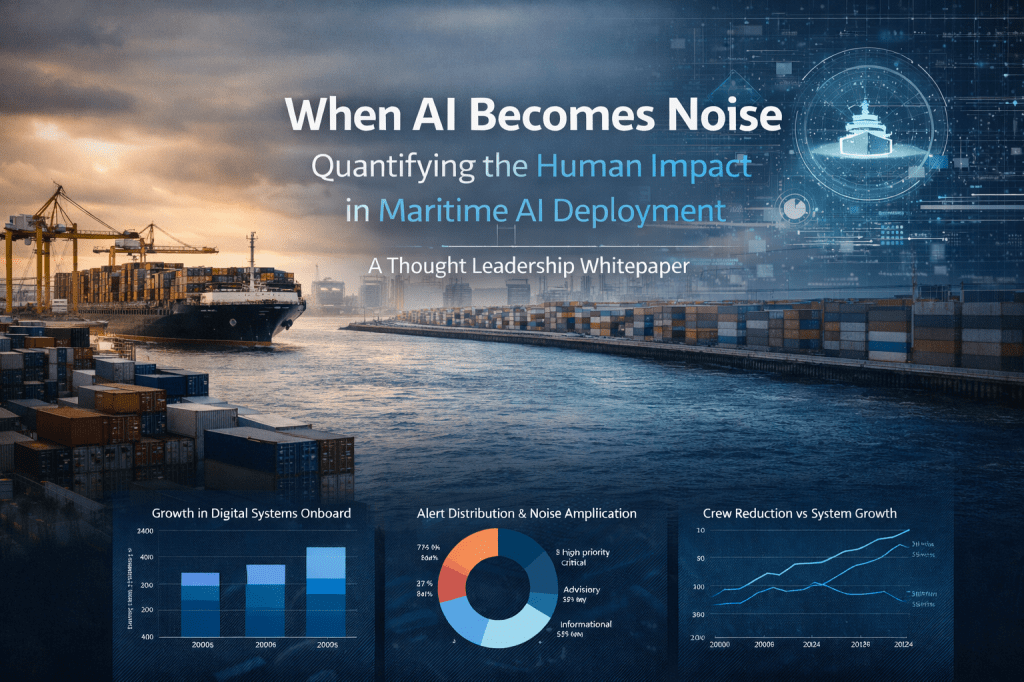

The kind that settles in after implementation. When systems are live, data streams are active, and dashboards are populated, yet the quality of decisions remains unchanged. Or worse, becomes harder to justify. There was no disagreement here. If anything, there was immediate alignment. AI in shipping does not collapse at the idea stage. It rarely fails because of a lack of ambition or investment. It fails, more predictably, at two points that receive far less attention. First, at data integrity. Second, at decision trust. Everything else (the sophistication of models, the elegance of interfaces, the scale of deployment) comes later, if at all.

That framing changes the discussion, because it forces a different set of questions. If systems are generating insights, why are experienced operators still relying on instinct?

Why are outputs questioned, reinterpreted, or quietly set aside?

Why does the final decision still sit firmly with the individual, even when supported by layers of analytics?

The answer, as it emerged through the conversation, was not technical. Most systems, it seemed, stop just short of where they are needed most.

They inform.

They highlight.

They suggest.

But they do not provide the structure required to support a decision that must be explained, defended, and, if necessary, audited. And in shipping, that distinction carries weight. Decisions are not abstract. They are tied to cost, compliance, contractual exposure, and, in some cases, safety. They do not end when they are made. They are revisited, questioned, and sometimes challenged long after the moment has passed. In that environment, the issue is no longer whether AI can produce an answer. It is whether that answer can withstand scrutiny. That is where the conversation settled. Not on what AI can do, but on what it must become to matter.

The Data Illusion

From there, the discussion moved into what is often described as the foundation of AI: data. At first glance, this is where most explanations stop. The industry narrative is familiar: more data, better systems, improved decisions. But the reality inside shipping looks very different. There is no shortage of data.

Engine logs are recorded continuously.

Noon reports capture voyage conditions.

Charterparty clauses define commercial exposure.

Maintenance histories, claims records, fuel consumption, compliance metrics: each exists, often in detail.

The volume is not the constraint. The structure is. Each dataset is created for a specific purpose, within a specific context, and often by a different part of the organisation. Technical data serves engineering needs. Commercial data supports chartering decisions. Compliance records exist to satisfy regulatory requirements. They are not designed to work together. As a result, when these datasets are brought into an AI environment, they do not form a system. They remain fragments.

A maintenance log does not explain its commercial implication.

A voyage report does not align with regulatory exposure.

A charter clause does not reflect technical risk.

To a human operator, these connections can be inferred. Experience fills the gaps. To a system, those relationships do not exist unless they are explicitly defined. That is where a critical assumption begins to break down. There is a quiet belief across the industry that if data exists, AI will understand it. Operationally, this is not true.

A scanned manual may contain valuable knowledge, but it is not structured data.

A spreadsheet with inconsistent labels cannot be reliably compared.

A diagram does not translate into machine logic.

Without defined relationships, context, and intent, a system does not interpret data; it retrieves it. And retrieval is not understanding. This distinction matters more in shipping than in most industries, because decisions rarely depend on a single dataset. They emerge from the interaction between many. When those interactions are not modelled, the system produces information, but it misses meaning.

That is where the illusion begins to show. The industry does not lack data. It lacks usable structure.
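To make the point about explicit relationships a little more concrete, here is a minimal Python sketch. Everything in it is hypothetical and for illustration only: the record types `MaintenanceEntry` and `CharterTerm`, the vessel name, the figures, and the simplified rule that maintenance downtime counts against a charter off-hire allowance (real charterparty off-hire provisions are far more nuanced). It simply contrasts retrieval, which returns every record for a vessel as unrelated fragments, with a relationship that someone has explicitly defined.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class MaintenanceEntry:
    # Hypothetical technical record: days the vessel was unavailable for a job.
    vessel: str
    component: str
    off_hire_days: float


@dataclass(frozen=True)
class CharterTerm:
    # Hypothetical commercial record: off-hire days tolerated before exposure arises.
    vessel: str
    off_hire_allowance_days: float


def retrieve(records, vessel):
    """Retrieval: every record mentioning the vessel, with no link between them."""
    return [r for r in records if r.vessel == vessel]


def commercial_exposure(maintenance, terms, vessel):
    """An explicitly defined (and deliberately simplified) relationship:
    technical downtime is counted against the charter allowance.
    Returns the number of days over the allowance, or 0.0 if within it."""
    term = next(t for t in terms if t.vessel == vessel)
    used = sum(m.off_hire_days for m in maintenance if m.vessel == vessel)
    return max(0.0, used - term.off_hire_allowance_days)


maintenance = [
    MaintenanceEntry("MV Alpha", "main engine", 2.5),
    MaintenanceEntry("MV Alpha", "boiler", 1.0),
]
terms = [CharterTerm("MV Alpha", 3.0)]

print(retrieve(maintenance + terms, "MV Alpha"))        # three separate fragments
print(commercial_exposure(maintenance, terms, "MV Alpha"))  # 0.5
```

The data is identical in both calls. Only the second one carries meaning across the technical and commercial records, and only because the relationship between them was written down rather than assumed.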

Conclusion — Where This Actually Leaves Us

By the time the conversation closed, the focus had shifted completely.

Not toward better models.

Not toward more data.

Not toward faster systems.

But toward something far more fundamental. Structure. Because what became clear through each layer of the discussion is this:

Shipping does not fail because individual decisions are wrong.

It fails because the interaction between decisions is not understood in time.

A technically correct choice can still create commercial loss.

A commercially sound decision can still introduce regulatory exposure.

A compliant action can still weaken operational resilience.

These are not isolated errors. They are system-level blind spots. And most current AI systems, despite their sophistication, are not built to address that level of interaction.

They optimise functions. But shipping is not a function-driven environment.

It is a constraint-driven system, where every decision sits inside multiple overlapping pressures—financial, regulatory, technical, and operational.

Interestingly, many of these same themes are echoed in a recent piece on Maritime Innovations.

The argument there is direct:

AI in maritime must earn its place at the decision table—not through capability, but through trust, traceability, and defensibility.

That alignment is not coincidental.

It reflects a broader shift.

Away from:

- dashboards

- isolated predictions

- feature-heavy systems

And toward:

- decision accountability

- structured logic

- systems that can show their work

This is where the real opportunity sits.

Not in building smarter tools.

But in building decision architectures that:

- connect fragmented realities

- expose assumptions clearly

- separate fact from inference

- and allow operators to stand behind outcomes with confidence

Because in shipping, decisions are not judged at the moment they are made.

They are judged later:

under scrutiny,

under pressure,

and often with consequences attached.

That is the standard AI must meet.

Not intelligence.

Not speed.

But defensibility.

And until systems are built for that,

AI in maritime will continue to look impressive in demonstration

and remain limited in real operations.

The question, then, is no longer:

What can AI do for shipping?

It is:

Can it support a decision that someone is willing to defend?

That is where this conversation ultimately led.

And that is where the industry now needs to focus.

Read the full article: https://maritime-innovations.com/
